Google Is Testing Web Bot Auth: A New Way to Verify Bot Traffic

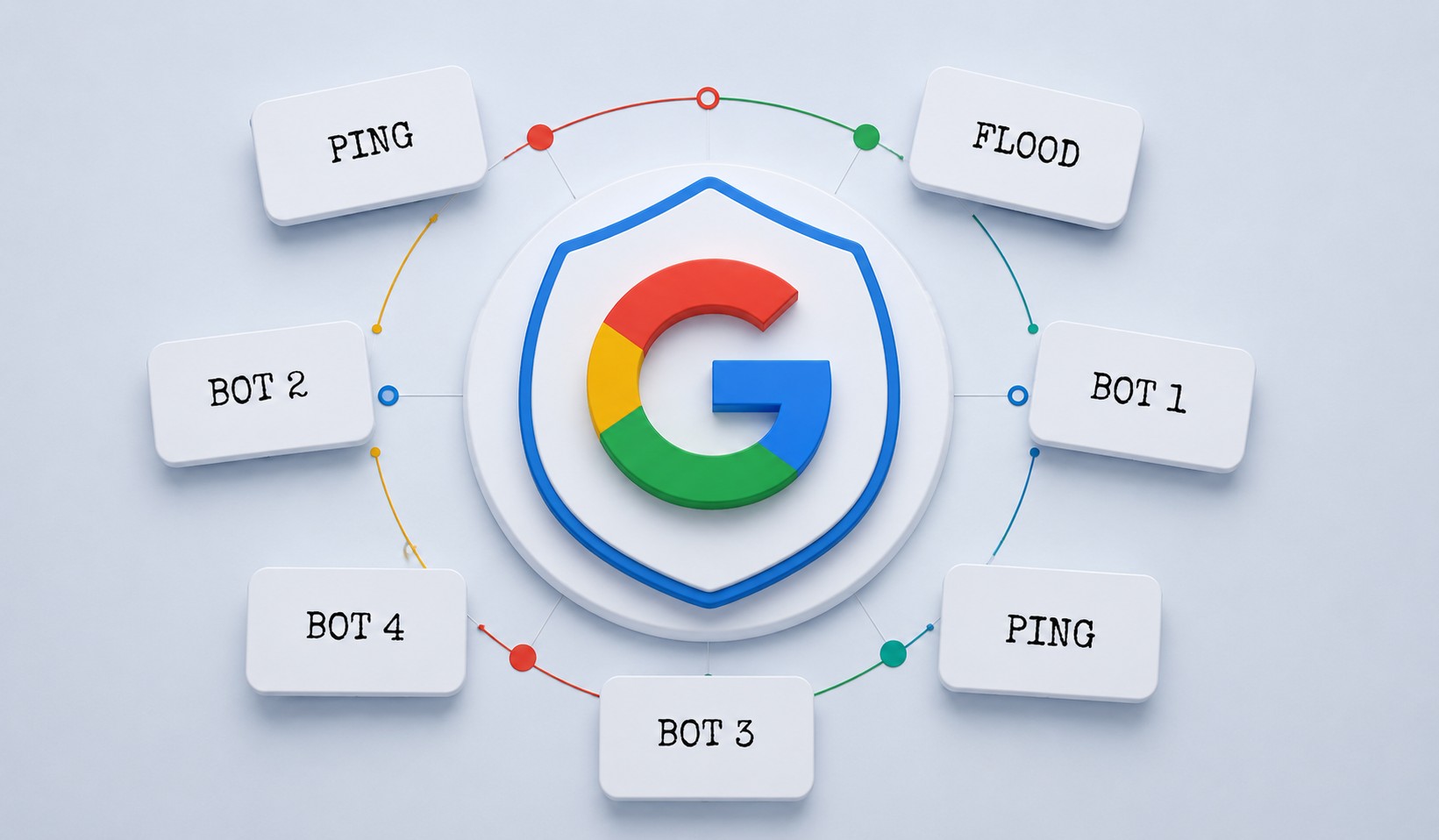

If you’ve ever stared at your server logs wondering whether that automated traffic is actually who it claims to be, Google may have something useful in the pipeline. The company is currently testing an experimental protocol called Web Bot Auth — a cryptographic verification system designed to help websites confirm that bot traffic is genuinely coming from the service it says it is.

It’s not a finished product yet, and Google is being upfront about that. But the direction it’s heading in is interesting, and the problem it’s trying to solve is very real. Rogue bots impersonating legitimate crawlers have been a headache for site owners for years. Web Bot Auth is Google’s attempt to give the web a better way of dealing with that.

What Is Web Bot Auth and How Does It Work?

At its core, Web Bot Auth is built on HTTP Message Signatures (RFC 9421), an IETF standard for signing parts of an HTTP request so the receiver can check who sent it. The idea is straightforward enough: instead of a bot simply declaring who it is through a user-agent string (which any rogue script can copy), it would need to cryptographically prove its identity.
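To make “cryptographically prove” a little more concrete, here is a minimal sketch of the string a verifier would rebuild before checking the signature, following the signature base layout from RFC 9421. The function name `build_signature_base` is hypothetical, and the covered components (`@authority`, `signature-agent`) and the `web-bot-auth` tag are assumptions taken from the current drafts rather than anything Google has finalized. It is not a full structured-fields parser, and the actual signature check (typically Ed25519) would need a cryptography library, which is left out here.

```python
import re


def build_signature_base(signature_input: str, components: dict) -> str:
    """Rebuild the text the sender signed, in RFC 9421 signature base layout.

    signature_input: raw Signature-Input value, e.g. 'sig1=("@authority");created=...'
    components: values for each covered component, keyed by component name.
    """
    # Split 'label=(...);params' at the first '='.
    label, _, params = signature_input.partition("=")
    covered_list = re.match(r"\(([^)]*)\)", params)
    if not label or covered_list is None:
        raise ValueError("malformed Signature-Input")

    # Component names are the quoted strings inside the parentheses.
    # Simplification: component parameters such as ';sf' are not handled.
    covered = re.findall(r'"([^"]+)"', covered_list.group(1))

    lines = []
    for name in covered:
        if name not in components:
            raise KeyError(f"missing covered component: {name}")
        lines.append(f'"{name}": {components[name]}')

    # The last line repeats the covered list and parameters exactly as sent.
    lines.append(f'"@signature-params": {params}')
    return "\n".join(lines)


if __name__ == "__main__":
    example_input = (
        'sig1=("@authority" "signature-agent");created=1700000000;'
        'expires=1700000300;keyid="abc";tag="web-bot-auth"'
    )
    print(build_signature_base(example_input, {
        "@authority": "example.com",
        "signature-agent": '"https://agent.example"',
    }))
```

The verifier then checks the bytes in the Signature header against this string using the sender’s public key. If anything in the covered components was changed in transit or made up by an impersonator, the check fails.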

Think of it like the difference between someone telling you their name at the door versus presenting a verified ID. Web Bot Auth moves bot identification from “self-reported” to “cryptographically verified.” A site receives an automated request, checks it against the service’s published cryptographic credentials, and either confirms or can’t confirm the identity. It doesn’t automatically decide who gets in — but it gives you a much more reliable signal to base that decision on.

Discovery rests on three pieces rather than any per-site setup. Keys are published in the standardized JSON Web Key Set (JWKS) format, which any server can read. They’re kept at a fixed, predictable location under the /.well-known/ path on the bot’s domain. And each HTTP request carries a new Signature-Agent header that acts like a digital business card, pointing directly to the sender’s key directory. The result is clean and scalable, and it doesn’t require manual setup between each website and each service.
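Those three pieces can be sketched in a few lines. Treat the details as assumptions: the exact well-known path (`http-message-signatures-directory`) comes from the current draft and may change, the header is assumed to carry a single quoted https URL, and keys are assumed to be identified by their RFC 7638 JWK thumbprint. The function names are hypothetical, and fetching the directory over the network is deliberately left out.

```python
import base64
import hashlib
import json
from typing import Optional
from urllib.parse import urlsplit

# Assumed well-known path from the draft directory spec; check the current draft.
DIRECTORY_PATH = "/.well-known/http-message-signatures-directory"


def key_directory_url(signature_agent: str) -> str:
    """Turn a Signature-Agent header value into the key directory URL."""
    origin = urlsplit(signature_agent.strip().strip('"'))
    if origin.scheme != "https" or not origin.netloc:
        raise ValueError("Signature-Agent must be an https URL")
    return f"https://{origin.netloc}{DIRECTORY_PATH}"


def jwk_thumbprint(jwk: dict) -> str:
    """RFC 7638 SHA-256 thumbprint for an OKP key such as Ed25519."""
    # Only the required members, sorted by name, with no whitespace.
    required = {name: jwk[name] for name in ("crv", "kty", "x")}
    canonical = json.dumps(required, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def find_key(jwks_text: str, key_id: str) -> Optional[dict]:
    """Return the key in a JWKS document whose thumbprint matches key_id."""
    for jwk in json.loads(jwks_text).get("keys", []):
        has_members = all(name in jwk for name in ("crv", "kty", "x"))
        if has_members and jwk_thumbprint(jwk) == key_id:
            return jwk
    return None
```

In practice you would take the `keyid` from the request’s Signature-Input header, fetch the directory at `key_directory_url(...)`, and pass the response body to `find_key`. If no key matches, the identity simply can’t be confirmed.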

Why Web Bot Auth Matters for Site Owners

The bot problem has gotten genuinely worse over the past couple of years. A Q1 2026 analysis found that nearly a third of all web traffic now comes from automated sources — bots, crawlers, and AI agents combined. Some of that traffic is legitimate. A lot of it isn’t. And the frustrating part is that current identification methods are trivially easy to spoof. Any bad actor can set their user-agent string to mimic Googlebot or any other trusted crawler.

We’ve already seen how messy this gets — the situation where Cloudflare had to block rogue AI crawlers for misrepresenting themselves and ignoring robots.txt is a good example of where things stand today. Web Bot Auth is trying to build something more durable than that — a system where verified identity is cryptographically provable, not just claimed.

For site owners, the practical upside is the ability to build more confident allowlists. Right now, deciding which bots to allow or block involves a fair amount of guesswork. Web Bot Auth could shift that from “we’re fairly sure this is Googlebot” to “we’ve verified this cryptographically.” That’s a meaningful difference, especially as AI agents and automated services multiply.
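As a rough illustration of what a more confident allowlist might look like, the sketch below keys the decision on a verified agent origin instead of a user-agent string. Everything here is hypothetical: the outcome names, the `decide` function, the example origin, and the policy itself (in particular, blocking on a failed signature is a choice, not something the protocol dictates).

```python
from enum import Enum
from typing import Optional


class SignatureOutcome(Enum):
    VERIFIED = "verified"   # signature present and valid
    INVALID = "invalid"     # signature present but failed verification
    UNSIGNED = "unsigned"   # no signature on the request


# Hypothetical allowlist of agent origins you have chosen to trust.
ALLOWED_AGENTS = frozenset({"https://agent.example"})


def decide(outcome: SignatureOutcome,
           agent_origin: Optional[str] = None,
           allowed: frozenset = ALLOWED_AGENTS) -> str:
    """Map a verification outcome to a policy action."""
    if outcome is SignatureOutcome.VERIFIED:
        # Verified identity: allow if it is on the list, otherwise hold for review.
        return "allow" if agent_origin in allowed else "review"
    if outcome is SignatureOutcome.INVALID:
        # A signature that fails to verify is a stronger warning sign than none.
        return "block"
    # Unsigned traffic is not necessarily rogue; fall back to existing checks.
    return "legacy-checks"
```

The point of the three outcomes is that verification is a signal, not a verdict. The site still decides what to do with each one.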

Web Bot Auth Is Still Experimental — Here’s What That Means Practically

Google has published a new developer support page explaining how to verify requests using Web Bot Auth, but it comes with some important caveats. Right now, the protocol only covers a subset of Google’s traffic — specifically Google-Agent, which is Google’s user-triggered AI agent. Not all Google user agents are signing their requests yet, and Google has been clear that a missing signature doesn’t mean a bot is rogue. It might just mean it hasn’t migrated to the new protocol.

“We recommend that in addition to Web Bot Auth you continue relying on IP addresses, reverse DNS, and user-agent strings as we gradually roll out signed traffic.” — Google

That’s good advice for now. Don’t ditch your existing verification stack just because Web Bot Auth is on the horizon. Use it alongside the tools you already have, not as a replacement for them. The protocol is a proposal that’s still subject to change, and Google is explicitly asking for feedback through its official Web Bot Auth feedback form as the standard develops.
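For the reverse DNS part of that existing stack, a forward-confirmed lookup is the usual approach: resolve the IP to a hostname, check the hostname’s domain, then resolve the hostname back and confirm it returns the same IP. The sketch below assumes a suffix list you should confirm against Google’s current crawler verification documentation, handles IPv4 only, and uses hypothetical function names. The lookups are injectable so the logic can be tried without touching the network.

```python
import socket
from typing import Callable, List, Tuple

# Assumed suffix list; confirm against Google's current verification docs.
GOOGLE_SUFFIXES: Tuple[str, ...] = (
    ".googlebot.com",
    ".google.com",
    ".googleusercontent.com",
)


def _reverse(ip: str) -> str:
    """PTR lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward(host: str) -> List[str]:
    """A lookup: hostname to IPv4 addresses."""
    return socket.gethostbyname_ex(host)[2]


def forward_confirmed(ip: str,
                      suffixes: Tuple[str, ...] = GOOGLE_SUFFIXES,
                      reverse: Callable[[str], str] = _reverse,
                      forward: Callable[[str], List[str]] = _forward) -> bool:
    """True if ip reverse-resolves to an expected domain and resolves back."""
    try:
        host = reverse(ip).rstrip(".").lower()
    except OSError:
        return False  # no PTR record or lookup failure
    if not host.endswith(tuple(suffixes)):
        return False  # hostname is not under an expected domain
    try:
        return ip in forward(host)
    except OSError:
        return False
```

The suffix check uses a leading dot on purpose, so a hostname like `googlebot.com.evil.example` doesn’t pass. A request that carries no Web Bot Auth signature but passes this check is still a reasonable candidate for your allowlist today.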

Google has published official documentation for developers looking to get started: read Authenticate requests with Web Bot Auth (experimental) for the full technical details.

What Web Bot Auth Does for Automated Services Too

It’s worth noting this isn’t just a tool for website owners. Automated services (legitimate crawlers, AI agents, and other bots) benefit from Web Bot Auth as well. One of the annoying realities for well-behaved automated services is that their traffic sometimes gets caught in the crossfire when sites tighten up bot controls. And when a service’s credentials change, verification can break. Web Bot Auth’s standardized key format means services can update their cryptographic details without suddenly becoming unrecognizable to every site they interact with. More consistency, less maintenance overhead.
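On the receiving side, handling a service’s key updates gracefully mostly comes down to how you cache its key directory. Here is one possible sketch: keep the keys for a while, and refetch when a request shows up with a key ID you haven’t seen. The class name, the parameters, and the `key_id_of` hook are all hypothetical. How keys are identified depends on the draft, so it defaults to a `kid` member purely for illustration.

```python
import json
import time
from typing import Callable, Dict, Optional, Tuple


class KeyDirectoryCache:
    """Cache key directories and refetch when an unknown key ID appears."""

    def __init__(self,
                 fetch: Callable[[str], str],
                 max_age: float = 3600.0,
                 min_refresh: float = 60.0,
                 clock: Callable[[], float] = time.monotonic,
                 key_id_of: Callable[[dict], str] = lambda jwk: jwk.get("kid", "")):
        self._fetch = fetch              # returns the directory body for a URL
        self._max_age = max_age          # seconds before cached keys are stale
        self._min_refresh = min_refresh  # minimum gap between refetches
        self._clock = clock
        self._key_id_of = key_id_of
        self._entries: Dict[str, Tuple[float, Dict[str, dict]]] = {}

    def _load(self, url: str) -> Dict[str, dict]:
        keys = {}
        for jwk in json.loads(self._fetch(url)).get("keys", []):
            key_id = self._key_id_of(jwk)
            if key_id:
                keys[key_id] = jwk
        self._entries[url] = (self._clock(), keys)
        return keys

    def get_key(self, url: str, key_id: str) -> Optional[dict]:
        entry = self._entries.get(url)
        if entry is not None:
            fetched_at, keys = entry
            age = self._clock() - fetched_at
            if key_id in keys and age < self._max_age:
                return keys[key_id]
            if age < self._min_refresh:
                # Refreshed very recently: don't let unknown IDs trigger a fetch storm.
                return None
        # First use, stale cache, or unknown key ID: fetch the directory again.
        return self._load(url).get(key_id)
```

The `min_refresh` guard is a design choice worth keeping in some form. Without it, anyone could send requests with made-up key IDs and force your server to hit the service’s key directory on every one.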

Should You Do Anything About This Right Now?

Honestly? Not urgently. Web Bot Auth is experimental, the standard is still being finalized, and Google isn’t expecting site owners to overhaul their bot management setups today. What you should do is stay aware of it — especially if you’re running sites where bot traffic management is already part of your workflow.

If you want to get ahead of this, check whether your hosting provider plans to support the protocol — Google is actively encouraging site owners to ask that question. And if bot traffic monitoring isn’t already part of how you manage your site, now’s a good time to start. Our guide on managing AI bot traffic as part of your technical SEO audit covers exactly this — including how to use server logs and Cloudflare to monitor what’s hitting your site.

The broader picture here is that bot verification is heading toward a more structured, standards-based future. Web Bot Auth is one piece of that — and if it gets adopted widely, it could genuinely change how site owners think about automated traffic. Keep an eye on the Web Bot Auth Working Group for updates, and follow Google’s documentation as the experimental phase progresses.